2026

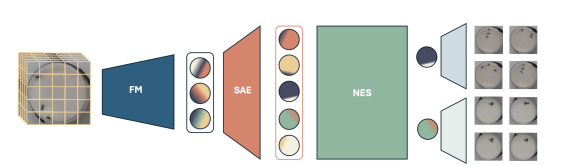

“Exploratory Causal Inference in SAEnce”

T. Mencattini*, R. Cadei*, F. Locatello

ICLR, 2026.

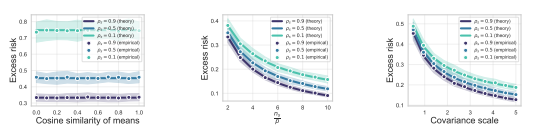

“High-dimensional Analysis of Synthetic Data Selection”

P. Rezaei, F. Kovacevic, F. Locatello*, M. Mondelli*

ICLR, 2026.

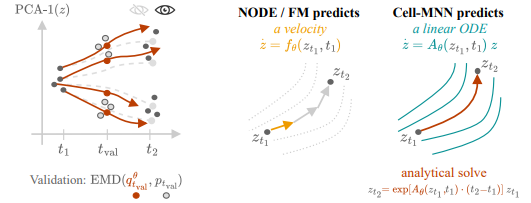

“Learning explicit single-cell dynamics using ODE representations”

J.P. von Bassewitz, A. Pervez, M. Fumero, M. Robinson, T. Karaletsos, F. Locatello

ICLR, 2026.

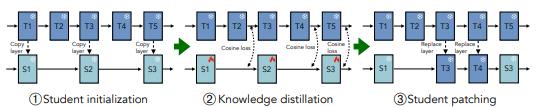

“Boomerang Distillation Enables Zero-Shot Model Size Interpolation”

S. Kangaslahti, N. V. Nayak, J. Geuter, M. Fumero, F. Locatello, D. Alvarez-Melis

ICLR, 2026.

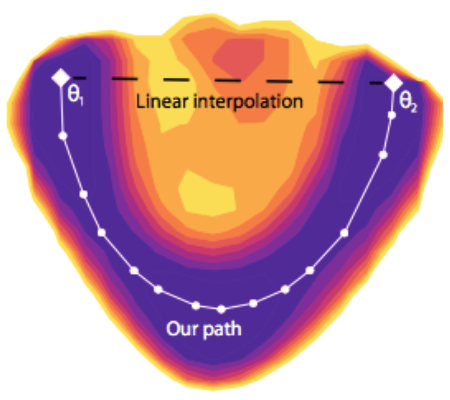

“Navigating the Latent Space Dynamics of Neural Models”

M. Fumero, L. Moschella, E. Rodolà*, F. Locatello*

ICLR, 2026.

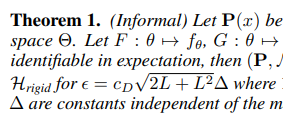

“Statistical and Structural Identifiability in Self-Supervised Learning”

W. Nelson, M. Fumero, T. Karaletsos, F. Locatello

ICLR, 2026.

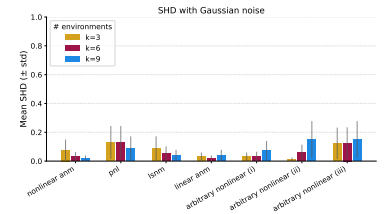

“On the identifiability of causal graphs with multiple environments”

F. Montagna

ICLR, 2026.

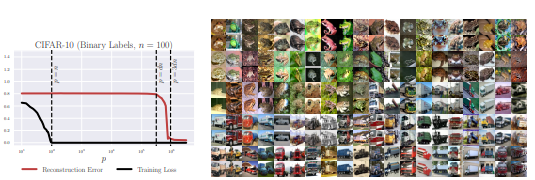

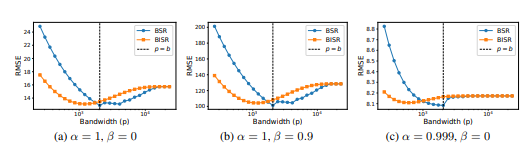

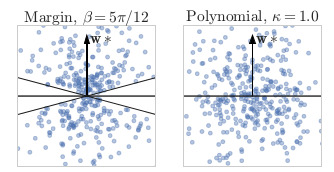

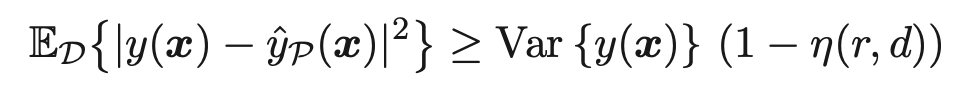

“A Law of Data Reconstruction for Random Features (and Beyond)”

L. Iurada*, S. Bombari*, T. Tommasi, M. Mondelli*

ICLR, 2026.

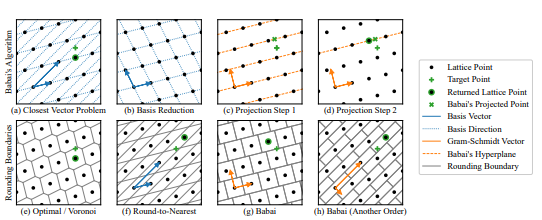

“The Geometry of LLM Quantization: GPTQ as Babai’s Nearest Plane Algorithm”

J. Chen, Y. Shabanzadeh, E. Crnčević, T. Hoefler, D. Alistarh

ICLR, 2026.

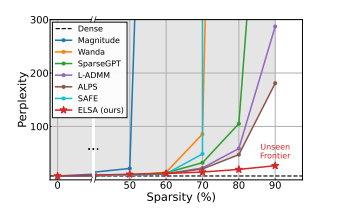

“The Unseen Frontier: Pushing the Limits of LLM Sparsity with Surrogate-Free ADMM”

K. Lee, H. Jang, D. Lee, D. Alistarh, N. Lee

ICLR, 2026.

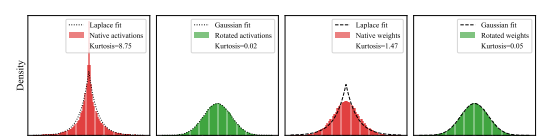

“Bridging the Gap Between Promise and Performance for FP4 Quantization”

V. Egiazarian, R. L. Castro, D. Kuznedelev, A. Panferov, S. Ashkboos, E. Kurtic, S. Pandit, A. N. Marques, M. Kurtz, T. Hoefler, D. Alistarh

ICLR, 2026.

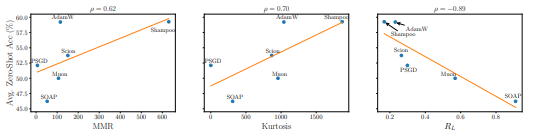

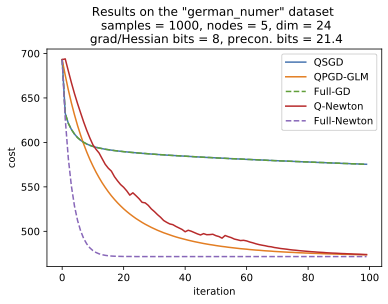

“Beyond Outliers: A Study of Optimizers Under Quantization”

G. Vlassis, S. Ashkboos, A. Volkova, T. Hoefler, D. Alistarh

ICLR, 2026.

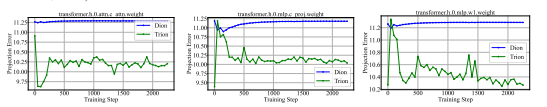

“FFT-Based Dynamic Subspace Selection for Low-Rank Adaptive Optimization of Large Language Models”

I. Modoranu, M. Safaryan, E. Schultheis, M. Ryabinin, A. Chumachenko, D. Alistarh

ICLR, 2026.

“Back to Square Roots: An Optimal Bound on the Matrix Factorization Error for Multi-Epoch Differentially Private SGD”

N. Kalinin, J. Upadhyay, R. McKenna, C. H. Lampert

ICLR, 2026.

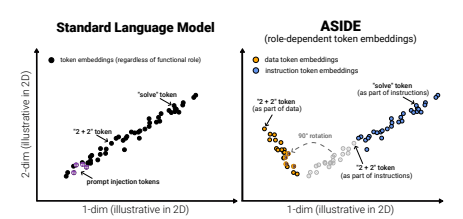

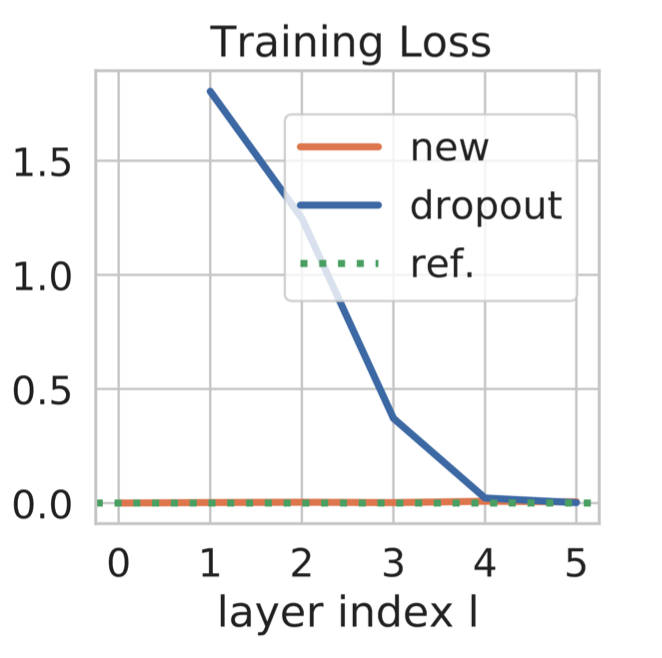

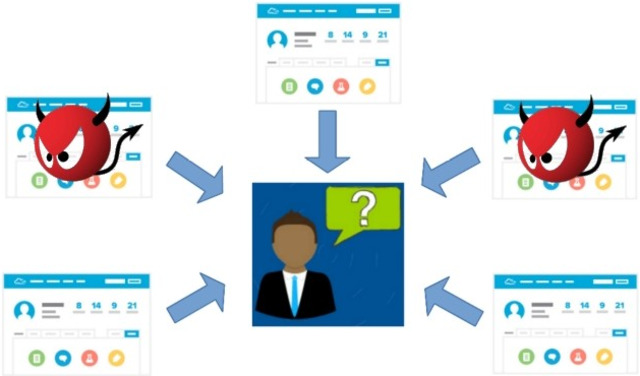

“ASIDE: Architectural Separation of Instructions and Data in Language Models”

E. Zverev, E. Kortukov, A. Panfilov, A. Volkova, S. Tabesh, S. Lapuschkin, W. Samek, C. H. Lampert

ICLR, 2026.

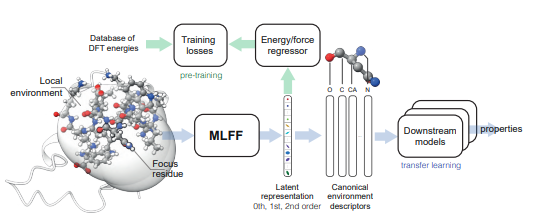

“Representing local protein environments with machine learning force fields”

M. Bojan, S. Vedula, A. Maddipatla, N. B. Sellam, F. Napoli, P. Standee, A. M. Bronstein

ICLR, 2026.

2025

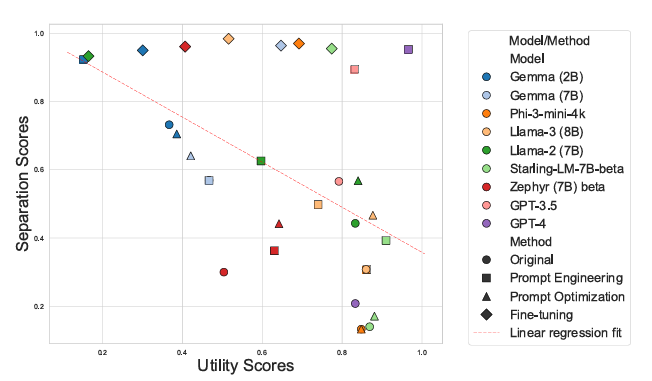

“Can LLMs Separate Instructions From Data? And What Do We Even Mean By That?”

E. Zverev, S. Abdelnabi, S. Tabesh, M. Fritz, C. H. Lampert

ICLR, 2025

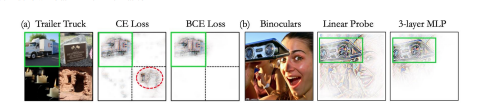

“How to Probe: Simple Yet Effective Techniques for Improving Post-hoc Explanations”

S. Gairola, M. Böhle, F. Locatello, B. Schiele

ICLR, 2025

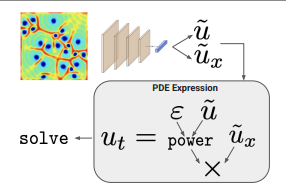

“Mechanistic PDE Networks for Discovery of Governing Equations”

A. Pervez, E. Gavves, F. Locatello

ICML, 2025

“Prediction-Powered Causal Inference”

R. Cadei, I. Demirel, P. De Bartolomeis, L. Lindorfer, S. Cremer, C. Schmid, F. Locatello

NeurIPS, 2025

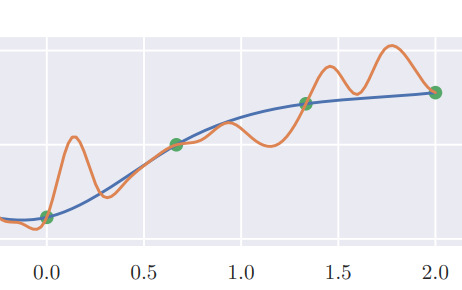

“Connecting neural models latent geometries with relative geodesic representations”

H. Yu, B. Inal, G. Arvanitidis, S. Hauberg, F. Locatello, M. Fumero

NeurIPS, 2025

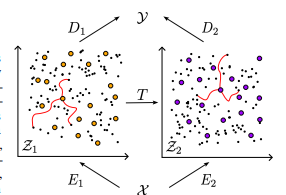

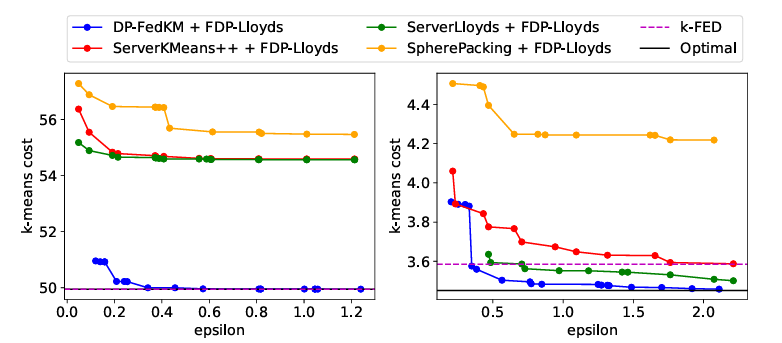

“Differentially Private Federated k-Means Clustering with Server-Side Data”

J. Scott, C. H. Lampert, D. Saulpic

ICML, 2025

“Logic Gate Neural Networks are Good for Verification”

F. Kresse, E. Yu, C. H. Lampert, T. A. Henzinger

NeuS, 2025

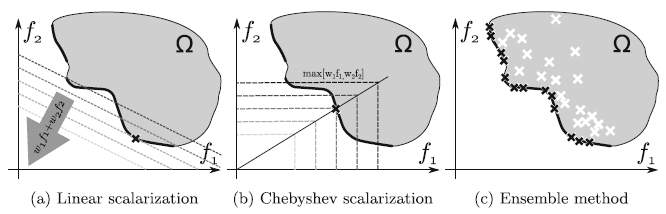

“Generalization in Multi-Objective Machine Learning”

P. Súkeník, C. H. Lampert

Neural Computing & Applications, 2025

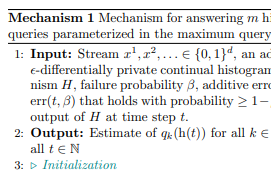

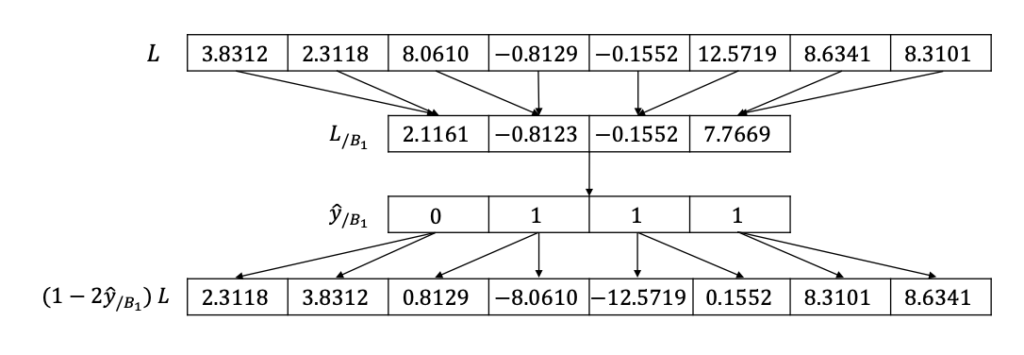

“Differentially Private Continual Release of Histograms and Related Queries”

M. Henzinger, A. R. Sricharan, T. A. Steiner

AISTATS, 2025

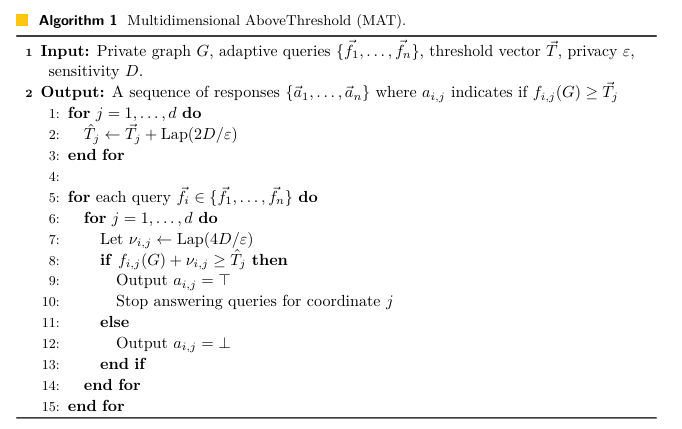

“Near-Optimal Differentially Private Graph Algorithms via the Multidimensional Above Threshold Mechanism”

L. Dhulipala, M. Henzinger, G. Z. Li, Q. C. Liu, A. R. Sricharan, L. Zhu

ESA, 2025

“Improved Differentially Private Continual Observation Using Group Algebra”

M. Henzinger, J. Upadhyay

SODA, 2025

2024

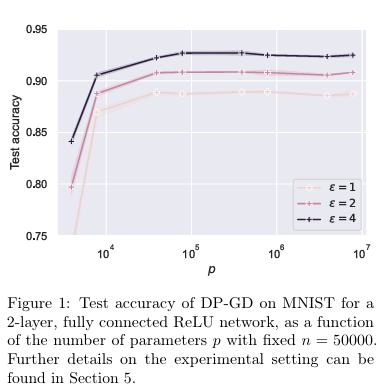

“Privacy for Free in the Over-Parameterized Regime”

S. Bombari, M. Mondelli

arXiv, 2024

2023

2022

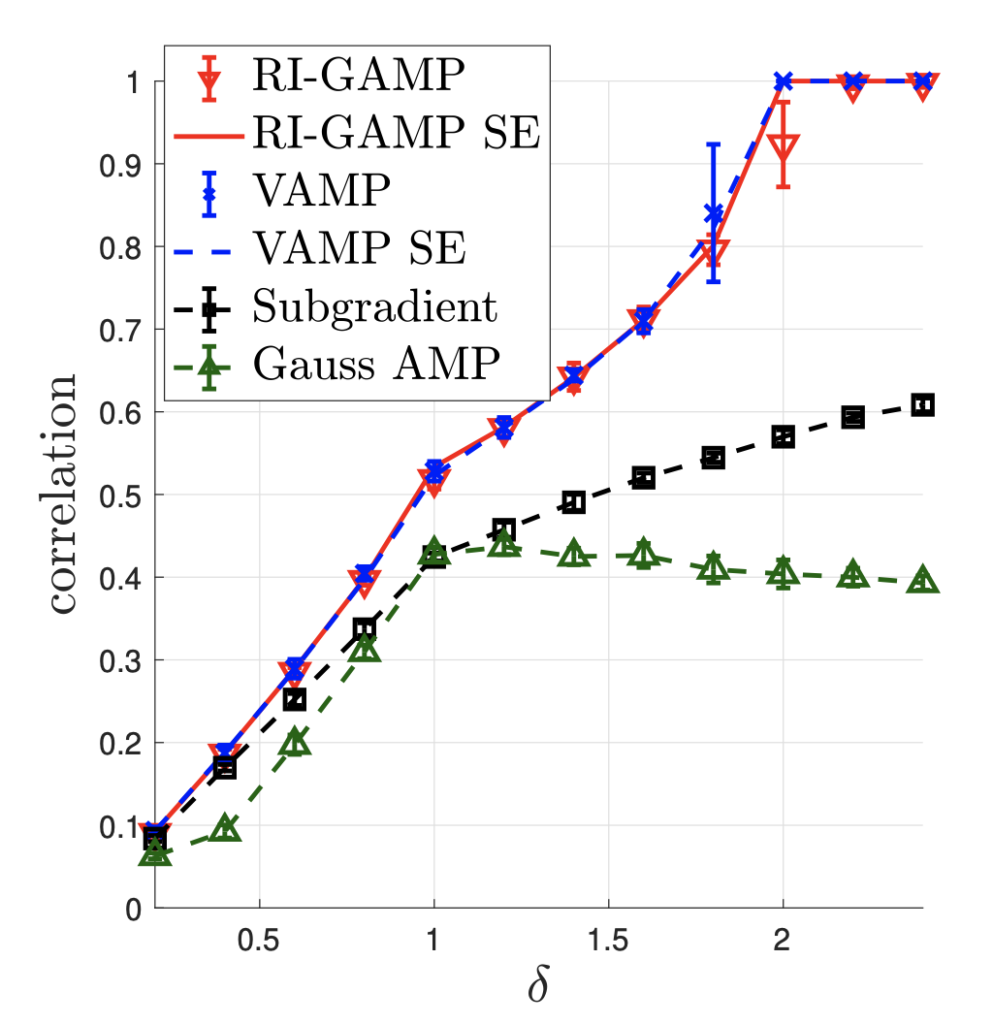

“Estimation in Rotationally Invariant Generalized Linear Models via Approximate Message Passing”

R. Venkataramanan, K. Kögler, M. Mondelli

ICML, 2022

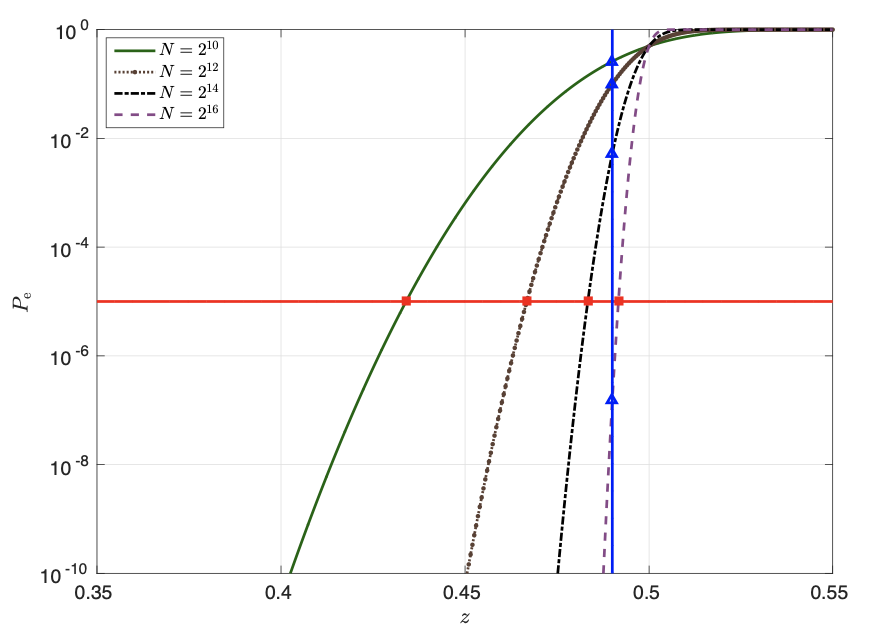

“Polar Coded Computing: The Role of the Scaling Exponent”

D. Fathollahi, M. Mondelli

ISIT, 2022

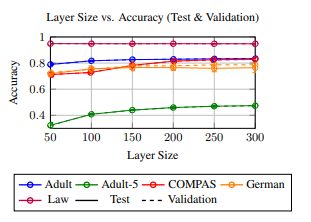

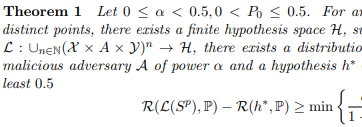

“Fairness-Aware PAC Learning from Corrupted Data”

N. Konstantinov, C. H. Lampert

JMLR, 2022

“Almost-Orthogonal Layers for Efficient General-Purpose Lipschitz Networks”

B. Prach, C. H. Lampert

ECCV, 2022

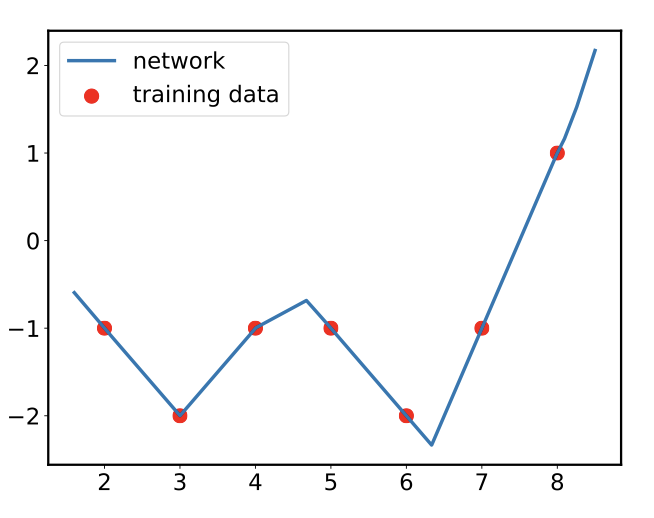

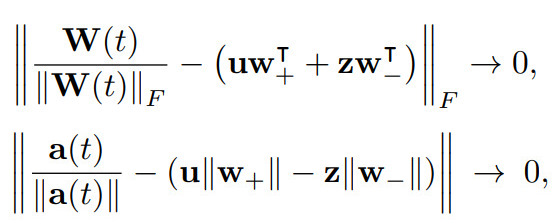

“Mean-field Analysis of Piecewise Linear Solutions for Wide ReLU Networks”

A. Shevchenko, V. Kungurtsev, M. Mondelli

JMLR, 2022

2021

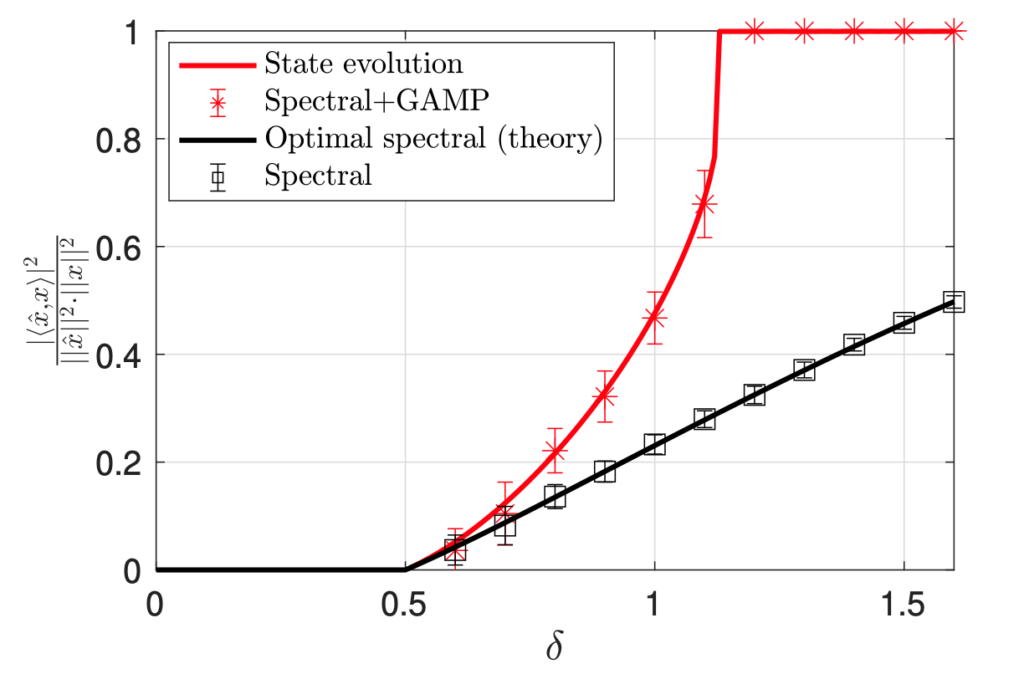

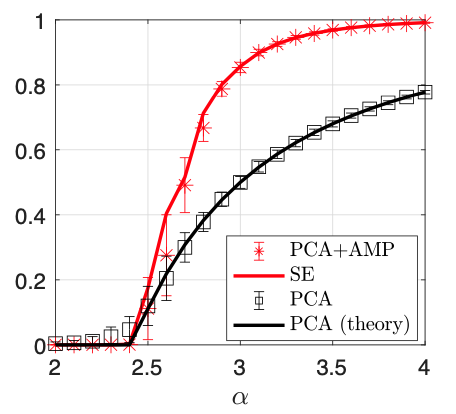

“Approximate Message Passing with Spectral Initialization for Generalized Linear Models”

M. Mondelli, R. Venkataramanan

AISTATS, 2021

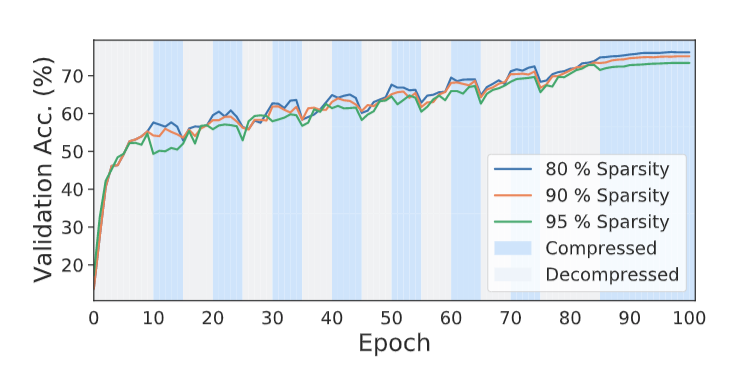

“AC/DC: Alternating Compressed/DeCompressed Training of Deep Neural Networks”

A. Peste, E. Iofinova, A. Vladu, D. Alistarh

NeurIPS, 2021

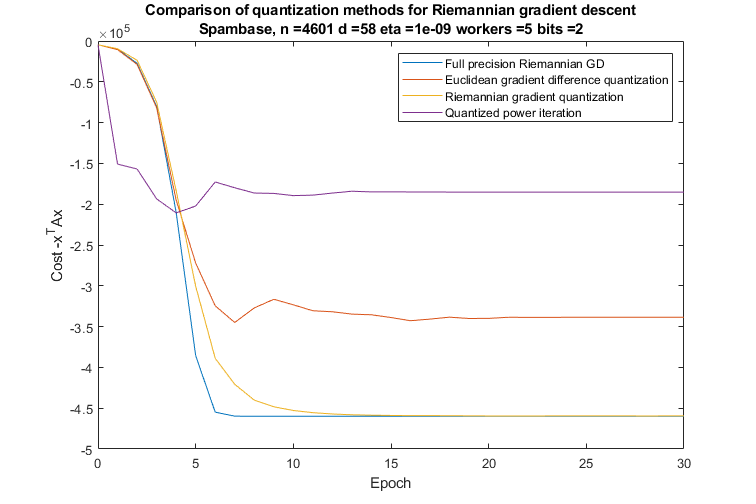

“Distributed Principal Component Analysis with Limited Communication”

F. Alimisis, P. Davies, B. Vandereycken, D. Alistarh

NeurIPS, 2021

“When Are Solutions Connected in Deep Networks?”

Q. Nguyen, P. Bréchet, M. Mondelli

NeurIPS, 2021

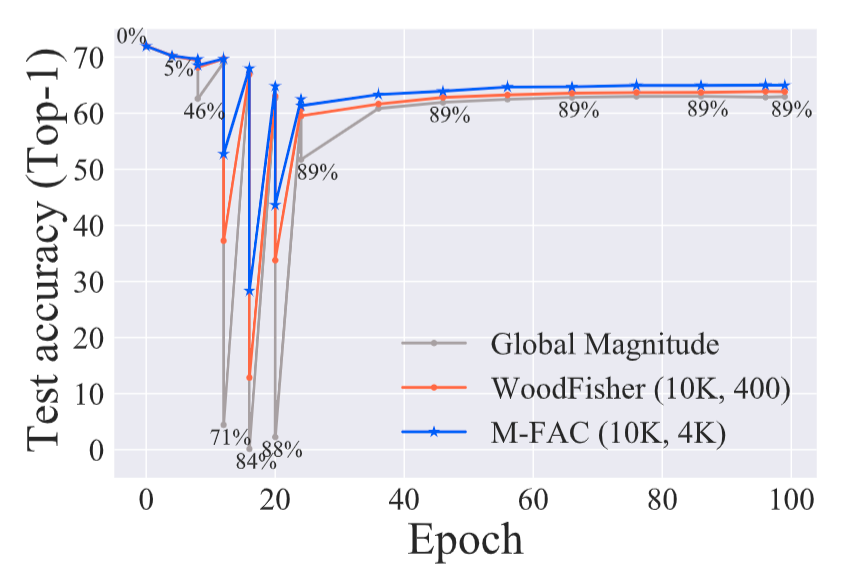

“M-FAC: Efficient Matrix-Free Approximations of Second-Order Information”

E. Frantar, E. Kurtic, D. Alistarh

NeurIPS, 2021

“The inductive bias of ReLU networks on orthogonally separable data”

M. Phuong, C. H. Lampert

ICLR, 2021

“Byzantine-Resilient Non-Convex Stochastic Gradient Descent”

Z. Allen-Zhu, F. Ebrahimianghazani, J. Li, D. Alistarh

ICLR, 2021

“Towards Tight Communication Lower Bounds for Distributed Optimisation”

J. H. Korhonen, D. Alistarh

NeurIPS, 2021

“Asynchronous Decentralized SGD with Quantized and Local Updates”

G. Nadiradze, A. Sabour, P. Davies, S. Li, D. Alistarh

NeurIPS, 2021

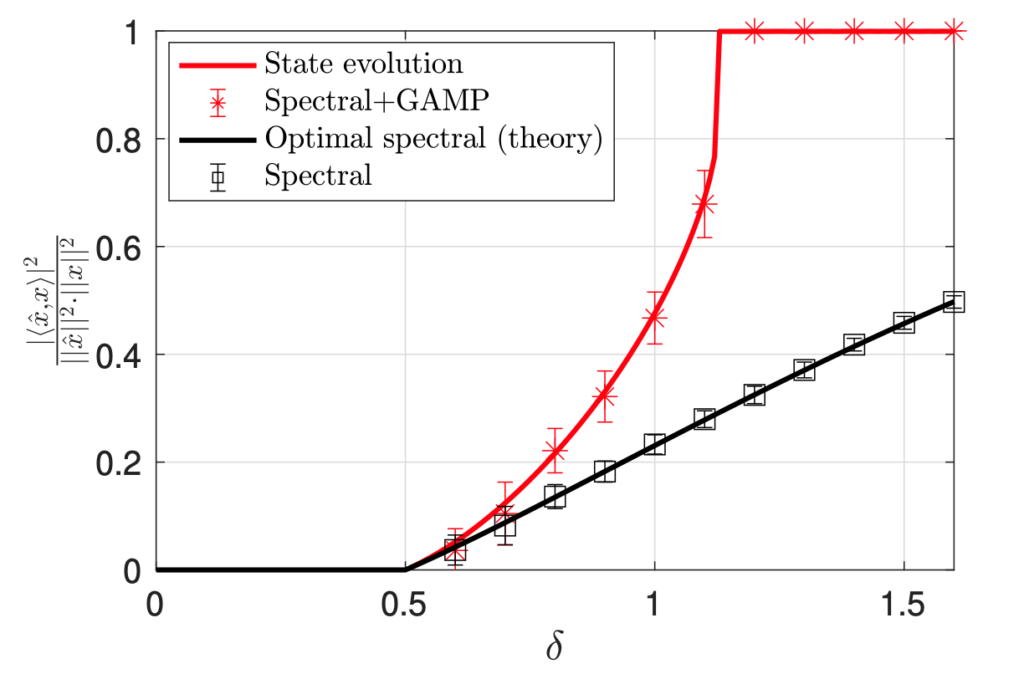

“PCA Initialization for Approximate Message Passing in Rotationally Invariant Models”

M. Mondelli, R. Venkataramanan

NeurIPS, 2021

“Tight Bounds on the Smallest Eigenvalue of the Neural Tangent Kernel for Deep ReLU Networks”

Q. Nguyen, M. Mondelli, G. F. Montufar

ICML, 2021

“Communication-Efficient Distributed Optimization with Quantized Preconditioners”

F. Alimisis, P. Davies, D. Alistarh

ICML, 2021

“Genomic architecture and prediction of censored time-to-event phenotypes with a Bayesian genome-wide analysis”

S. E. Ojavee, A. Kousathanas, D. T. Banos, E. J. Orliac, M. Patxot, K. Läll, R. Mägi, K. Fischer, Z. Kutalik, M. R. Robinson

Nature Communications, 2021

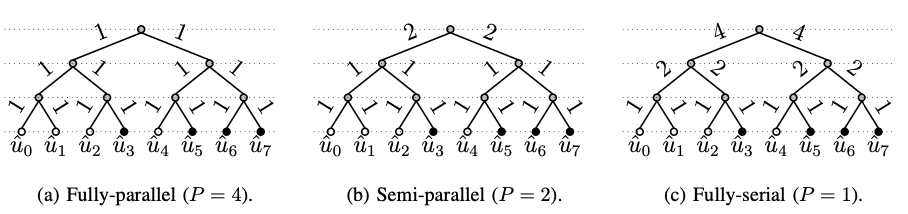

“Parallelism versus Latency in Simplified Successive-Cancellation Decoding of Polar Codes”

S. A. Hashemi, M. Mondelli, A. Fazeli, A. Vardy, J. Cioffi, A. Goldsmith

ISIT, 2021

“Sparse Multi-Decoder Recursive Projection Aggregation for Reed-Muller Codes”

D. Fathollahi, N. Farsad, S. A. Hashemi, M. Mondelli

ISIT, 2021

“Elastic Consistency: A Practical Consistency Model for Distributed Stochastic Gradient Descent”

G. Nadiradze, I. Markov, B. Chatterjee, V. Kungurtsev, D. Alistarh

AAAI, 2021

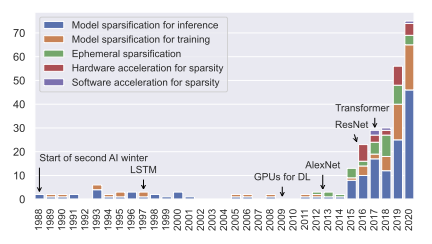

“Sparsity in Deep Learning: Pruning and growth for efficient inference and training in neural networks”

T. Hoefler, D. Alistarh, T. Ben-Nun, N. Dryden, A. Peste

JMLR, 2021

“Sublinear Latency for Simplified Successive Cancellation Decoding of Polar Codes”

M. Mondelli, S. A. Hashemi, J. Cioffi, A. Goldsmith

IEEE Transactions on Wireless Communications, 2021

“Optimal Combination of Linear and Spectral Estimators for Generalized Linear Models”

M. Mondelli, C. Thrampoulidis, R. Venkataramanan

FoCM, 2021

2020

“Global Convergence of Deep Networks with One Wide Layer Followed by Pyramidal Topology”

Q. Nguyen, M. Mondelli

NeurIPS, 2020

“WoodFisher: Efficient Second-Order Approximation for Neural Network Compression”

S. P. Singh, D. Alistarh

NeurIPS, 2020

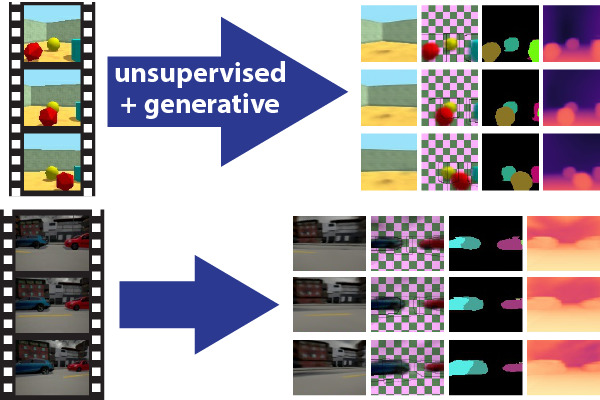

“Unsupervised object-centric video generation and decomposition in 3D”

P. Henderson, C. H. Lampert

NeurIPS, 2020

“Relaxed Scheduling for Scalable Belief Propagation”

V. Aksenov, D. Alistarh, J. H. Korhonen

NeurIPS, 2020

“Binary Linear Codes With Optimal Scaling: Polar Codes With Large Kernels”

A. Fazeli, H. Hassani, M. Mondelli, A. Vardy

IEEE Transactions on Information Theory, 2020

“Does SGD Implicitly Optimize for Smoothness?”

V. Volhejn, C. H. Lampert

GCPR, 2020

“Landscape Connectivity and Dropout Stability of SGD Solutions for Over-parameterized Neural Networks”

A. Shevchenko, M. Mondelli

ICML, 2020

“On the Sample Complexity of Adversarial Multi-Source PAC Learning”

N. Konstantinov, E. Frantar, D. Alistarh, C. H. Lampert

ICML, 2020

“Probabilistic inference of the genetic architecture underlying functional enrichment of complex traits”

M. Patxot, M. Robinson

Nature Communications, 2020

“Bayesian reassessment of the epigenetic architecture of complex traits”

D. Trejo Banos, M. Robinson

Nature Communications, 2020

“Functional vs. parametric equivalence of ReLU networks”

M. Phuong, C. H. Lampert

ICLR, 2020

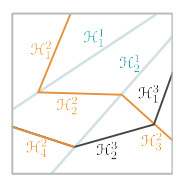

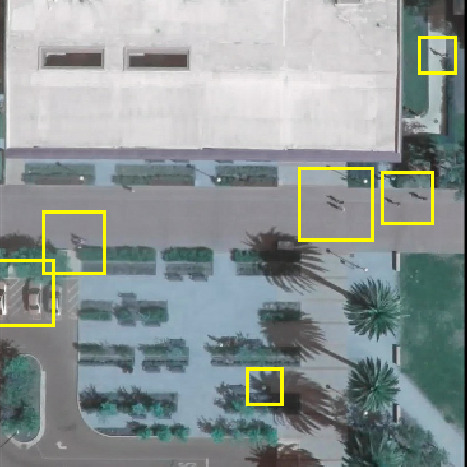

“Localizing Grouped Instances for Efficient Detection in Low-Resource Scenarios”

A. Royer, C. H. Lampert

WACV, 2020

2019

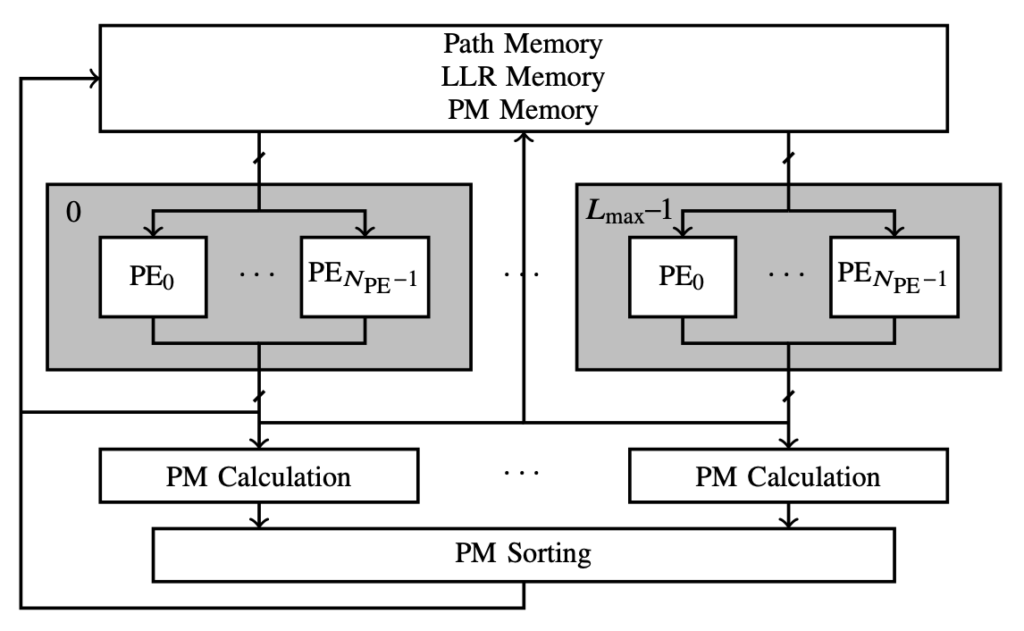

“Rate-flexible fast polar decoders”

S. A. Hashemi, C. Condo, M. Mondelli, W. J. Gross

IEEE Transactions on Signal Processing, 2019

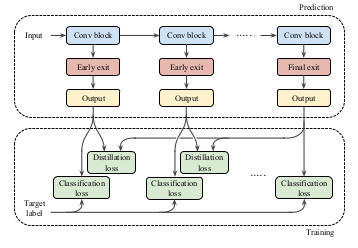

“Distillation-Based Training for Multi-Exit Architectures”

M. Phuong, C. H. Lampert

ICCV, 2019

“Towards Understanding Knowledge Distillation”

M. Phuong, C. H. Lampert

ICML, 2019

“On the Connection Between Learning Two-Layer Neural Networks and Tensor Decomposition”

M. Mondelli, A. Montanari

AISTATS, 2019